J. Electromagn. Eng. Sci, Volume 21(4), 2021

Abstract

In this study, we consider real observation scenarios and propose an efficient method to accurately distinguish drones from birds using features obtained from their micro-Doppler (MD) signatures. In the simulations conducted using a rotating-blade model and a flapping-wing model, the classification result degraded significantly due to the diversity of both drones and birds, but a combination of features obtained for longer observation times significantly improved the accuracy. MD bandwidth was found to be the most efficient feature, but sufficient observation time was required to exploit the period of time-varying MD as a useful feature.

Unmanned aerial vehicles (drones) have wide applications, such as military reconnaissance, aerial mapping, and environmental monitoring. However, drones can also be used for illegal surveillance or military purposes. Therefore, efficient methods are required to detect drones and to distinguish them from similar targets, mainly birds.

Automatic target recognition (ATR) [1] using radar signals can effectively detect and classify drones. ATR recognizes a target by analyzing data collected by a radar that uses wideband electromagnetic signals, and it has been used to classify enemy jets, tanks, and other weapons in warfare. It analyzes a compressed wideband signal to extract two signatures: a high-resolution range profile (HRRP) and an inverse synthetic aperture radar (ISAR) image, which represents the radar cross-section (RCS) distribution information [1–3]. However, these two methods might be inefficient when the size and RCS of the targets are similar or if the target is engaged in additional motion during the coherent processing interval (CPI). Drones have an RCS similar to that of birds (approximately −20 dBsm), which impedes classification using RCS and HRRP. Furthermore, ISAR images can be significantly blurred due to the time-varying micro-Doppler (MD) effect [4] caused by the rotating blades of a drone or the flapping wings of a bird.

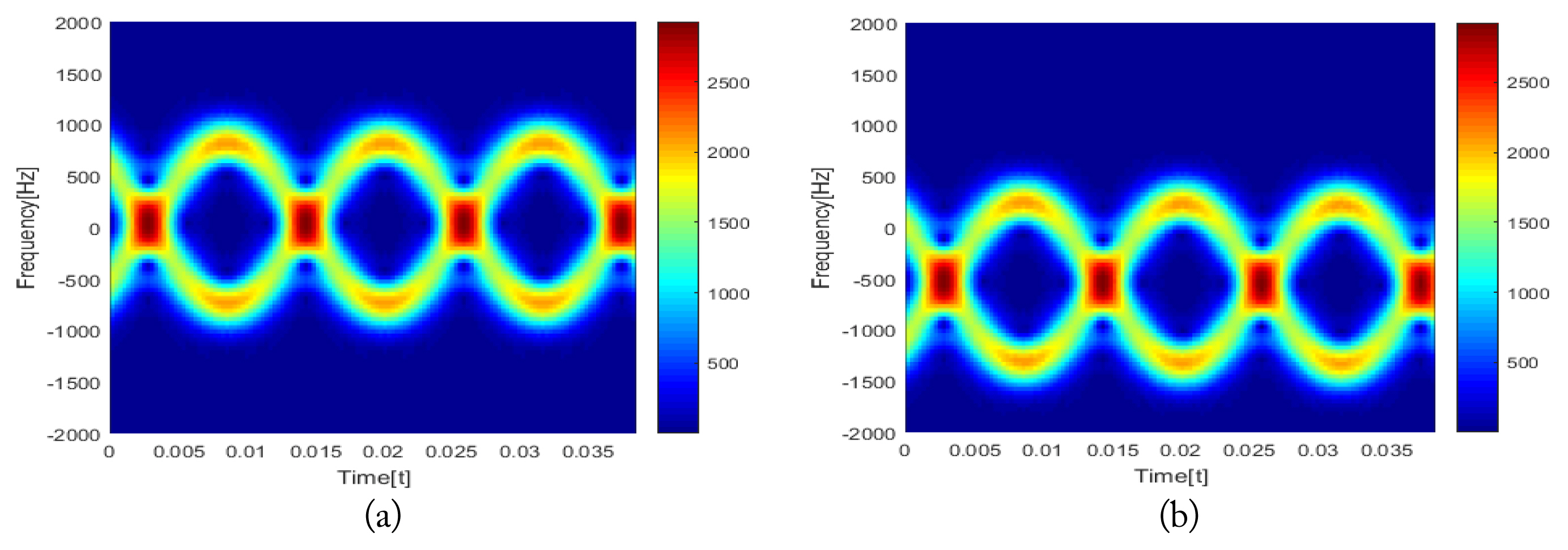

MD represents the time-varying micro-motion of a target [4–8]; thus, MD analysis has high potential for use in ATR. The basic principle of MD analysis is that the rapid mechanical rotation and vibration components of a rigid body cause additional Doppler frequency modulation on the returned radar signal. This additional modulation can significantly blur the ISAR image in the cross-range direction and change the amplitude of the range bin that corresponds to the blade position in the HRRP, thereby degrading the ATR accuracy [9–12]. However, MD can effectively distinguish drones from birds because the two have distinct micro-motions and therefore different MD signatures. A drone blade is engaged in two-dimensional (2D) rotation, so its MD in the time-frequency (TF) domain is symmetric, and the rotation period is well represented [5, 6]. In addition, the MD bandwidth is relatively large due to the high rotation speed of the blade, and blade flashes appear due to the blade RCS. In contrast, the MD of a bird is caused by wing flapping, which is not symmetric and has a narrower bandwidth than the signature of a drone blade. Therefore, MD can be used to achieve high ATR accuracy in distinguishing drones from birds.

In this study, we evaluated how various MD features of a frequency-modulated continuous-wave (FMCW) radar signal [13] affect the accuracy of discrimination between birds and drones. For this purpose, we constructed a radar signal by using a rotating blade and a bird wing composed of a plate and an ellipsoid, each of which has an analytically defined RCS. In addition, efficient features for classifying drones and birds were proposed by expanding the features previously presented at a conference [14]. Classifications were conducted for various observation scenarios, and the usefulness of each feature was analyzed. In addition, the improvement achieved by feature fusion was analyzed, and the accuracies of a nearest-neighbor classifier (NNC1) [15] and a neural network classifier (NNC2) [16] were compared. The classification results showed that the MD bandwidth was the most effective feature for a short observation time Tob, but that Tob must be extended to exploit the time-periodic nature of MD. The correct classification ratio Pc ≈ 100% was achieved by using only three features. NNC1 was found to be more appropriate than NNC2 for drone–bird classification.

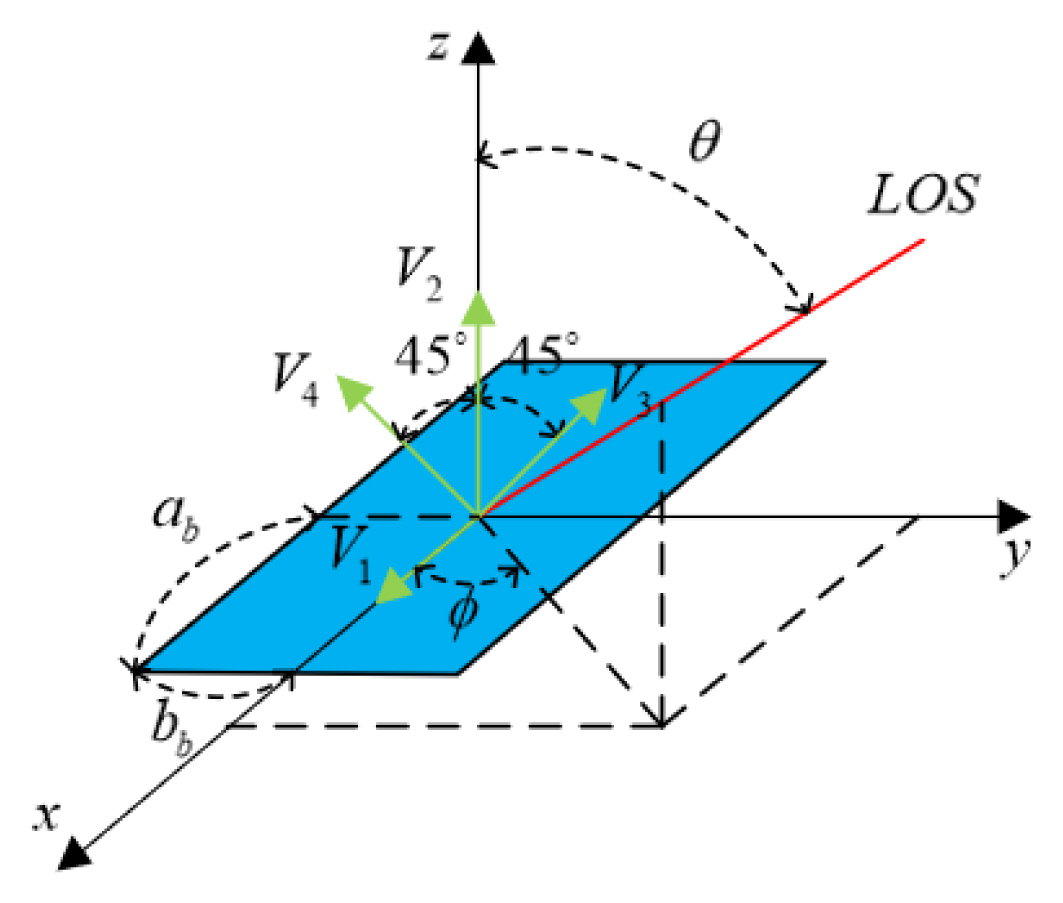

A drone blade can be modeled using two collinear plates of width 2ab and length 2bb, which are rotated around the x-axis, one by +45° and the other by −45° (Fig. 1). The coordinates of a blade tip rotating clockwise at frequency fb around the z-axis are obtained as

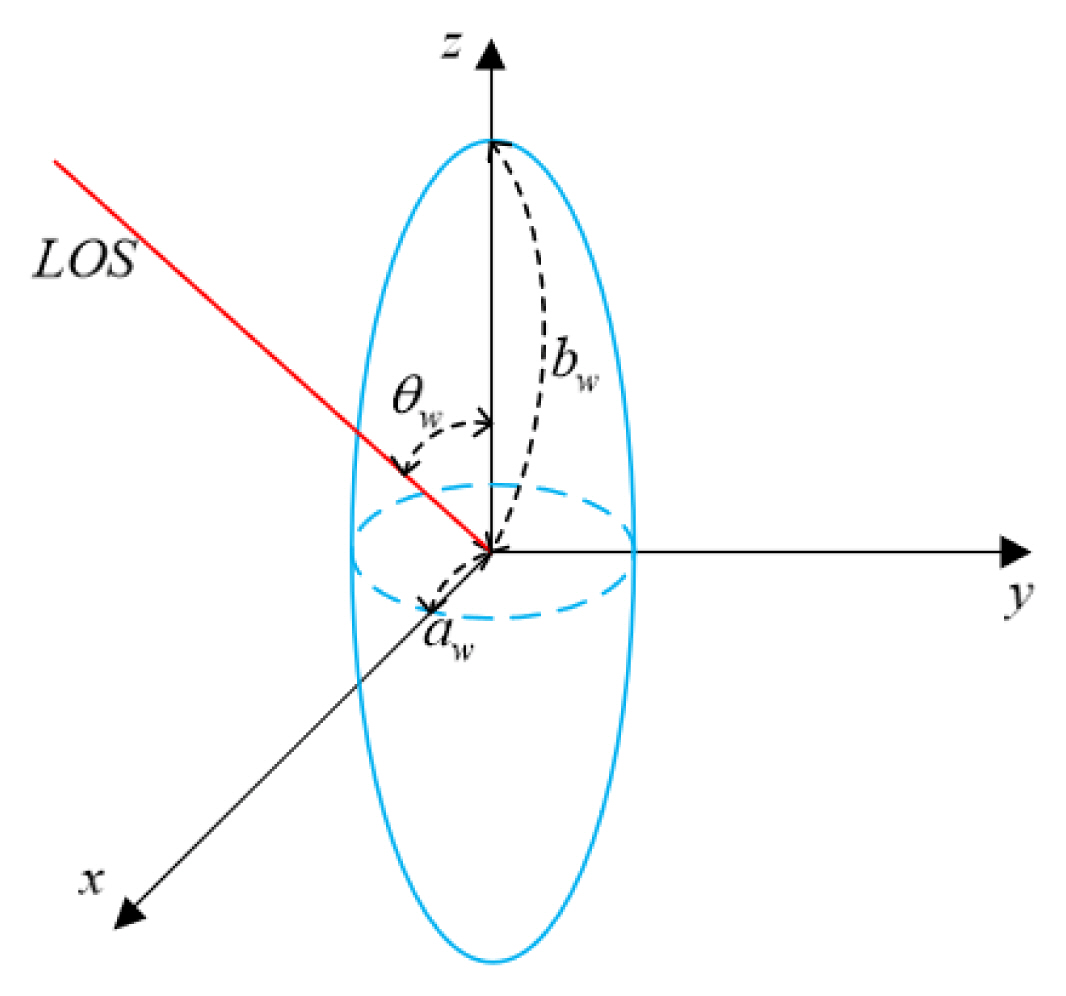

Assuming that a plate is observed at a line-of-sight (LOS) vector composed of an azimuth angle φ and an elevation θ (Fig. 2), the RCS of the flat plate rotated by 45° is analytically given as [13]:

where

with

Using the same R(t) as that in (1), Vi for i = 1, 2, 3, 4 change accordingly as

so the RCS of the rotating blade can be obtained at each LOS by using (2).

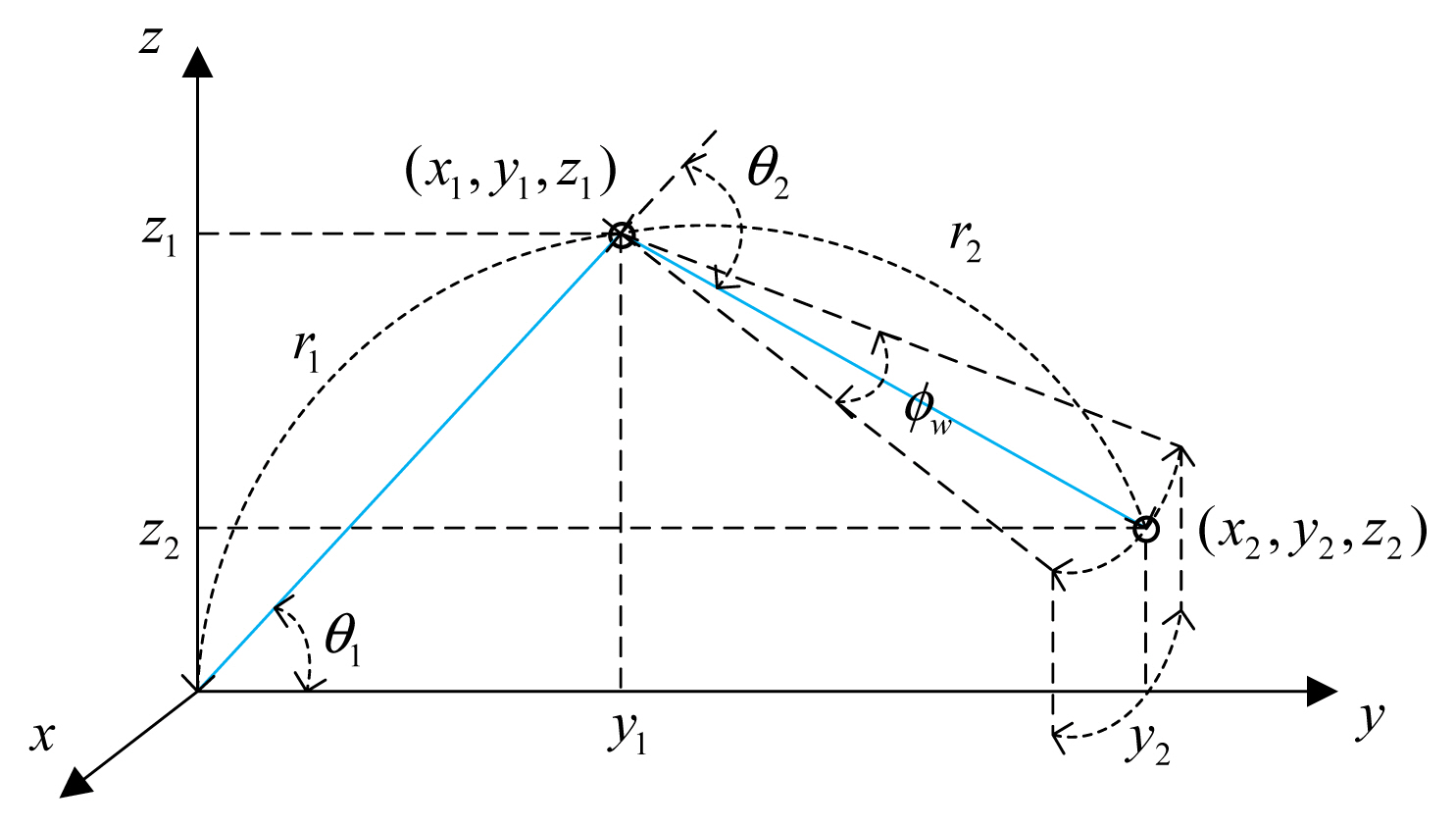

The bird motion was modeled using the upper and lower muscles of the wing (Fig. 3). With the same sinusoidal frequency fw, the analysis assumed that the lower muscle tip at (x1, y1, z1) rotated by θ1 = 40°cos(2πfwt) + 15° and the upper muscle tip at (x2, y2, z2) rotated by θ2 = 30°cos(2πfwt) + 40°. The forward–backward movement of the upper wing was modeled by rotating (x2, y2, z2) by φw = 20°sin(2πfwt) + 40° in the x-y plane. Thus, the two-muscle position can be expressed as

where r1 and r2 are

The wing muscle can have various shapes but our main concern is the MD analysis; thus, the muscle was modeled as an ellipsoid (Fig. 4). As in the case of a flat plate, the RCS of the ellipsoid observed at an observation angle θw with respect to the major axis is analytically given as

where aw and bw are the lengths of the minor and major axes, respectively [13]. Assuming that the vector of the major axis of the lower and upper muscles is p̄i (i = 1 for the lower and 2 for the upper muscle), θw is calculated as

where

are the centers of muscles 1 and 2, respectively.
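As a minimal sketch of the flapping model described above, the three time-varying angles can be evaluated directly from the expressions given in the text. The function name and the sampling of one period are illustrative choices; the conversion of these angles to muscle-tip coordinates is not reproduced here.

```python
import math

def wing_angles_deg(t, f_w):
    """Return (theta1, theta2, phi_w) in degrees at time t [s] for flapping frequency f_w [Hz]."""
    phase = 2.0 * math.pi * f_w * t
    theta1 = 40.0 * math.cos(phase) + 15.0  # lower muscle tip
    theta2 = 30.0 * math.cos(phase) + 40.0  # upper muscle tip
    phi_w = 20.0 * math.sin(phase) + 40.0   # forward-backward sweep in the x-y plane
    return theta1, theta2, phi_w

# Sample one flapping period at f_w = 4 Hz (illustrative value).
f_w = 4.0
period = 1.0 / f_w
samples = [wing_angles_deg(k * period / 8.0, f_w) for k in range(9)]
```

At t = 0 the lower and upper muscles are at their maximum elevation (55° and 70°), and half a period later they reach −25° and 10°.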

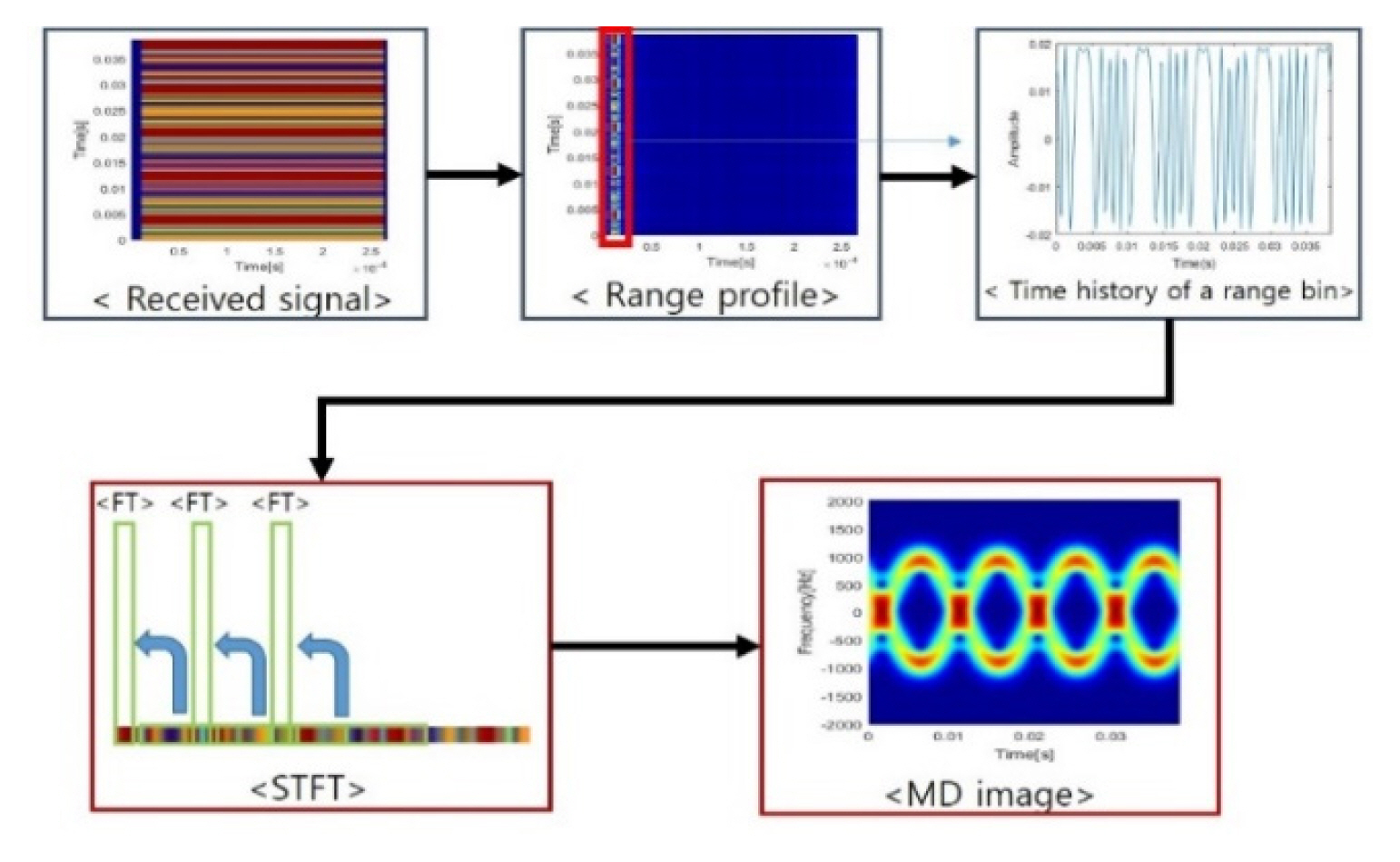

In this study, we used an FMCW signal to form the received radar signal. FMCW radar is a low-cost, low-power system that can be implemented in a small form factor and is widely used for short-range detection and imaging. The FMCW radar continuously transmits and receives a chirp signal within a given period Tchirp [13] (Fig. 5).

The transmitted chirp signal, which is repeated at intervals Tchirp, is expressed as

where f0 is the minimum frequency and Kr is the chirp rate (Fig. 5). The bandwidth B, Kr, and Tchirp are related as

The received chirp from a scatterer at r is given by

where the time delay τ = 2r/c and c is the speed of light. For the bistatic observation scenario, 2r is replaced by rt + rr, where rt is the distance from the transmitting radar to the target and rr is the distance from the target to the receiving radar.

Dechirping the received chirp yields an intermediate signal:

where Krτ is the beat frequency:

where the residual video phase Krτ²/2 has been removed.
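The relation between range, time delay, and beat frequency described above can be illustrated with a short sketch. It assumes the usual linear-chirp relation B = Kr·Tchirp and, as a further assumption, takes Tchirp equal to one pulse-repetition interval; all function names are hypothetical.

```python
C = 299_792_458.0  # speed of light [m/s]

def chirp_rate(bandwidth_hz, t_chirp_s):
    """K_r, assuming the usual linear-chirp relation B = K_r * T_chirp."""
    return bandwidth_hz / t_chirp_s

def delay_monostatic(r_m):
    """Two-way delay tau = 2r/c."""
    return 2.0 * r_m / C

def delay_bistatic(r_t_m, r_r_m):
    """Delay when 2r is replaced by r_t + r_r."""
    return (r_t_m + r_r_m) / C

def beat_frequency(k_r, tau_s):
    """Beat frequency K_r * tau of the dechirped signal."""
    return k_r * tau_s

def range_from_beat(k_r, f_beat_hz):
    """Invert the monostatic relation to recover the range."""
    return C * f_beat_hz / (2.0 * k_r)

# Illustrative numbers: B = 150 MHz, T_chirp = 1/(4 kHz) (assumed), target at 300 m.
k_r = chirp_rate(150e6, 1.0 / 4000.0)
tau = delay_monostatic(300.0)
f_beat = beat_frequency(k_r, tau)
recovered_range = range_from_beat(k_r, f_beat)
```

With these values the beat frequency is about 1.2 MHz, and inverting it returns the 300 m range.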

Assuming that a target with an RCS A0 is observed for a CPI TCPI, (11)–(15) are used for each slow time ts = kTchirp during TCPI. Thus, the received signal can be expressed as

where R(ts) is the distance to the target at ts. Dechirping changes s0(t, ts) to s0d(t, ts) as

If the third term in the above equation is ignored [17], a Fourier transform (FT) from the t-domain to the f-domain yields a dechirped signal in HRRP at ts (i.e., sr(f, ts)):

where pr(·) is a sinc function formed by a rectangular window of length Tchirp. The down-range location of the target can be calculated by determining the peak of pr(·).

The signal sMD(ts) = sr(Krτp, ts), where τp is the time delay of the signal reflected from the target. sMD(ts) is the time-varying signal of the target caused by the micro-motion and sampled at ts. However, sMD(ts) is time-varying and thus should be analyzed in the TF domain rather than the frequency domain. In this study, we used the short-time Fourier transform (STFT), which is simple to implement and free from cross-term interference. STFT transforms sMD(ts) to MD [18, 19]:

where fs is the frequency and W is the window function. To reduce the sidelobe caused by the rectangular window, we used the Hamming window. In discrete form, (22) is expressed as

where k is the time index, L is the window length, p is the sampled time index, and q is the sampled frequency index.
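A minimal sketch of a discrete STFT with a Hamming window is given below, using only the Python standard library. The hop size, the direct DFT, and the test tone are illustrative assumptions and are not taken from the paper.

```python
import cmath
import math

def hamming(length):
    """Symmetric Hamming window of the given length."""
    return [0.54 - 0.46 * math.cos(2.0 * math.pi * n / (length - 1)) for n in range(length)]

def stft(signal, length, hop):
    """Return a list of frames; each frame holds `length` complex DFT bins."""
    w = hamming(length)
    frames = []
    for start in range(0, len(signal) - length + 1, hop):
        seg = [signal[start + n] * w[n] for n in range(length)]
        frame = [
            sum(seg[n] * cmath.exp(-2j * math.pi * q * n / length) for n in range(length))
            for q in range(length)
        ]
        frames.append(frame)
    return frames

# Illustrative input: a 500 Hz complex tone sampled at a slow-time rate of 4 kHz.
prf = 4000.0
tone = [cmath.exp(2j * math.pi * 500.0 * k / prf) for k in range(128)]
frames = stft(tone, length=32, hop=16)
peak_bin = max(range(32), key=lambda q: abs(frames[0][q]))
```

With a 32-point window at 4 kHz, each bin spans 125 Hz, so the 500 Hz tone peaks in bin 4. A longer window sharpens the frequency axis at the cost of time resolution.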

The following features are proposed in this paper (Table 1).

The frequency frcs of the MD signal variation in the time domain represents the rotation frequency of the drone blades; thus, frcs is used as a feature and is defined as

where rcs (τ) = |sMD(τ)|.

The frequency fh of the MD change in the time domain differs considerably between the drone and the bird; thus, this change is used as a feature. To obtain fh, the frequency-domain image Iff is obtained by the FT of the MD image in (23) in the time domain as

where FTq is the FT in q for each p. Then, fh is obtained as

Due to the difference in the rotation speed, the MD bandwidth fv is used as a feature and can be defined as

where fmax(s(k), γ) is the maximum k corresponding to a value larger than γ% of the maximum s(k).
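The thresholding operation fmax can be sketched as follows. Only fmax follows the definition in the text; the helper fmin and the width computed from the two indices are assumptions added for illustration, since the exact expression for fv is not reproduced here.

```python
def fmax(s, gamma_percent):
    """Largest index k whose value exceeds gamma_percent % of max(s); None if there is none."""
    threshold = max(s) * gamma_percent / 100.0
    above = [k for k, v in enumerate(s) if v > threshold]
    return above[-1] if above else None

def fmin(s, gamma_percent):
    """Hypothetical counterpart: smallest index above the same threshold."""
    threshold = max(s) * gamma_percent / 100.0
    above = [k for k, v in enumerate(s) if v > threshold]
    return above[0] if above else None

def width_in_bins(s, gamma_percent):
    """Assumed width measure: distance between the outermost bins above the threshold."""
    hi, lo = fmax(s, gamma_percent), fmin(s, gamma_percent)
    return None if hi is None else hi - lo

# Illustrative profile along the MD frequency axis.
profile = [0, 1, 5, 10, 6, 2, 0]
upper = fmax(profile, 30)           # threshold = 3, so the bins above it are 2, 3, 4
width = width_in_bins(profile, 30)
```

A lower γ widens the measured extent but makes it more sensitive to noise in the tails of the profile.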

The MD frequencies in the positive and negative directions differ between drones and birds. Drones have symmetric rotation, whereas birds do not; thus, the 2D correlation cor± of ±MD frequencies is used as a feature. The correlation cor± is defined as

where X and Y are the MD images clipped from Iff for positive and negative MD frequencies, respectively; X̄ and Ȳ are the averages of X and Y; and V(·) is the variance.
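A normalized 2D correlation of this kind can be sketched as below. This is the standard Pearson-type correlation built from the averages and variances of the two images; whether the negative-frequency image is mirrored before comparison is not specified here, so the inputs are assumed to be already aligned.

```python
import math

def corr2d(x_img, y_img):
    """Normalized correlation between two equally sized 2D arrays (lists of rows)."""
    xs = [v for row in x_img for v in row]
    ys = [v for row in y_img for v in row]
    if len(xs) != len(ys):
        raise ValueError("images must have the same number of pixels")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(xs, ys))
    var_x = sum((a - mean_x) ** 2 for a in xs)
    var_y = sum((b - mean_y) ** 2 for b in ys)
    if var_x == 0.0 or var_y == 0.0:
        return 0.0  # a constant image carries no correlation information
    return cov / math.sqrt(var_x * var_y)

# Illustrative 2x2 images: identical images give +1, reversed ones give -1.
x = [[1.0, 2.0], [3.0, 4.0]]
same = corr2d(x, x)
reversed_img = corr2d(x, [[4.0, 3.0], [2.0, 1.0]])
```

A symmetric drone signature would be expected to give a value near 1, while an asymmetric flapping signature would give a lower value.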

Drone blades rotate symmetrically in the x-y plane, so the monostatic and bistatic MD images of drones are similar, whereas bird wings flap in the z-y plane, so their monostatic and bistatic MD images differ. The correlation cormb obtained using the monostatic and bistatic images is used as a feature:

where Iff,m and Iff,b are monostatic and bistatic Iff, respectively, and Īff,m and Īff,b are the averages of Iff,m and Iff,b.

The MD of the drone is widespread; thus, the peak value of the normalized MD frequency is small. However, the MD of the bird is narrowly spread; thus, the peak is large. Therefore, the peak value fpk,v is used as a feature:

where

$Z_v[n] = \sum_q |I_{ff}[p, q]|$

Similarly, the peak value fpk,h is used as a feature and is defined as

where

$Z_h[n] = \sum_q |I_{ff}[p, q]|$

Similarly, the peak value fpk,2D in the normalized Iff is

where

Entropy is a good measure of disorder [20]. It is large for a widely spread profile and small for a narrowly distributed one. Therefore, the entropy entv of Zv is used as a feature and can be expressed as

The entropy of Zh is also used as a feature:

Similarly, ent2D, as the 2D entropy of IN, is used as a feature:

For the convenience of combining features, they are defined as fi for i = 1, 2, …, 21 (Table 1).
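The peak and entropy features described above can be illustrated with a short sketch. It assumes that each profile is normalized to unit sum and that the entropy is the Shannon entropy with a natural logarithm; the exact definitions used for the features may differ.

```python
import math

def normalize(profile):
    """Scale a non-negative profile so that its entries sum to one."""
    total = sum(profile)
    return [v / total for v in profile]

def peak_value(profile):
    """Peak of the normalized profile: small when the energy is widely spread."""
    return max(normalize(profile))

def entropy(profile):
    """Shannon entropy (natural log) of the normalized profile; zero entries are skipped."""
    return -sum(p * math.log(p) for p in normalize(profile) if p > 0.0)

# Illustrative profiles: a widely spread one and a narrowly concentrated one.
wide = [1.0] * 8
narrow = [0.0, 0.0, 0.0, 8.0, 0.0, 0.0, 0.0, 0.0]
wide_peak, wide_entropy = peak_value(wide), entropy(wide)
narrow_peak, narrow_entropy = peak_value(narrow), entropy(narrow)
```

The wide profile gives a small peak (0.125) and a large entropy (ln 8), while the narrow profile gives a peak of 1 and zero entropy, which is the contrast the two feature families rely on.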

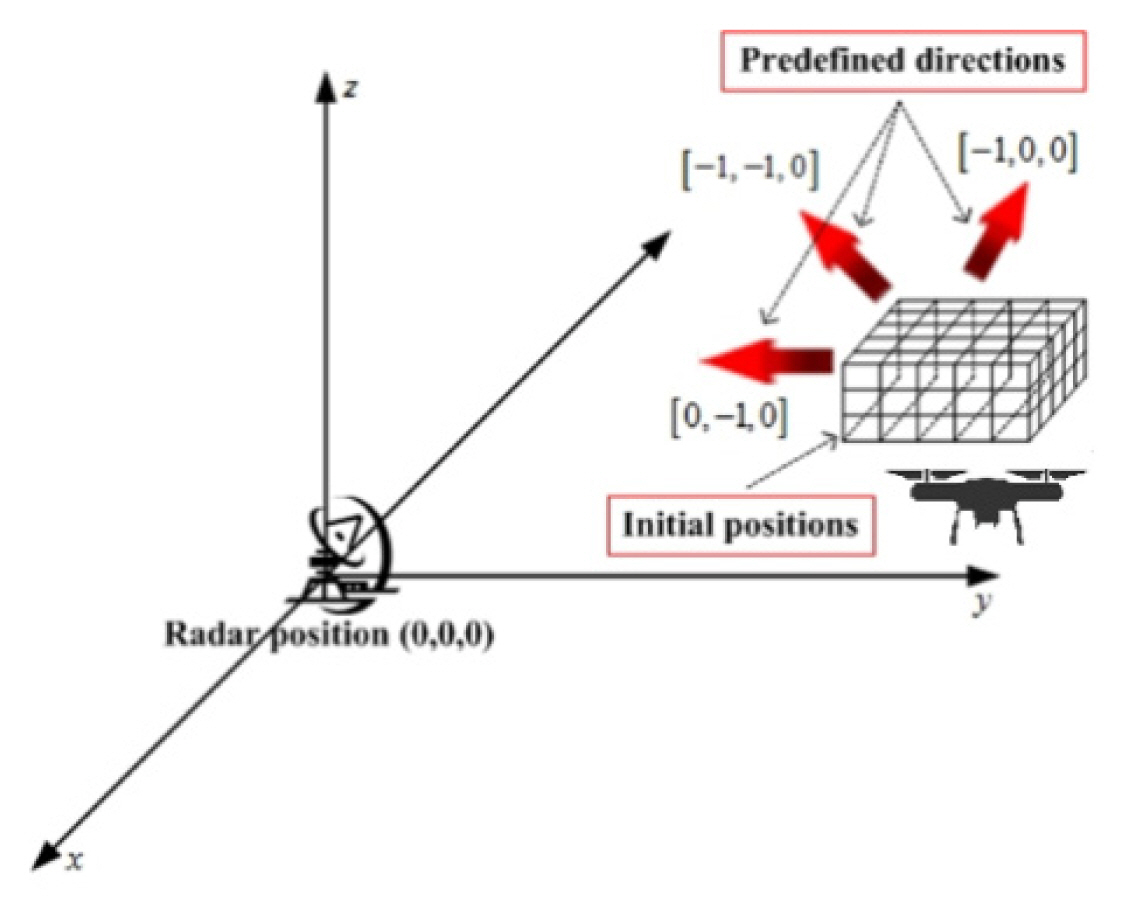

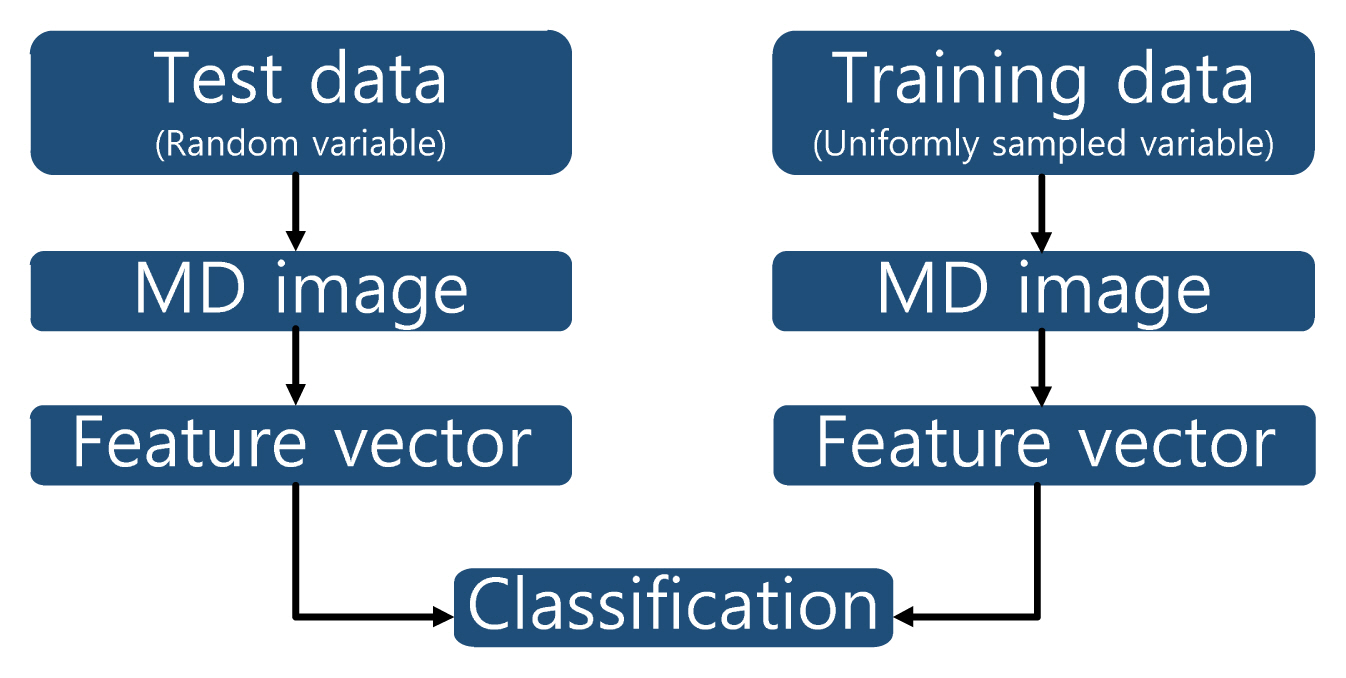

To obtain a classification ratio of approximately 100%, the training database should be constructed for all combinations of azimuth and elevation angles. In addition, the direction of flight and the frequencies of blade rotation and wing flapping should be considered; thus, the required computation time and memory space are very large. To circumvent these problems, we constructed a training database for flight scenarios [2] (Fig. 7). We uniformly sampled the three-dimensional space (training space) and assumed that the target flew at a given velocity in a given direction, starting from each given grid point. Then, the MD image was obtained and stored in the training database.

To consider the variation in flight direction and MD frequency, the MD image was obtained at each grid point by uniformly sampling the flight direction within ±θt in increments of Δθt. In addition, for each flight direction, fb of the drone blade was sampled between fb,1 and fb,2 in increments of Δfb, and fw of the bird wing was sampled between fw,1 and fw,2 in increments of Δfw. In the bistatic observation scenario, a receiving radar was located at a distance rb from the transmitting radar, and the training database was constructed using the same grid points and motion parameters.

The classifiers used in this study were NNC1 and NNC2, and their classification accuracies were compared. NNC1 uses a simple Euclidean norm as [15]

with

where χ̄u is a test vector and f̄i is a training vector that belongs to the ith class. The test vector χ̄u is assigned to the class i that yields the minimum Euclidean norm.
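A minimal sketch of a nearest-neighbor classifier based on the Euclidean norm is shown below. The two-element feature vectors and their values are made up for illustration and do not come from the simulations.

```python
import math

def nearest_neighbor_classify(test_vec, training):
    """Return the label of the training vector closest to test_vec in Euclidean distance.

    `training` is a list of (feature_vector, label) pairs.
    """
    best_label, best_dist = None, math.inf
    for vec, label in training:
        d = math.dist(test_vec, vec)
        if d < best_dist:
            best_label, best_dist = label, d
    return best_label

# Hypothetical two-feature training set (values are illustrative only).
training = [
    ([0.9, 0.8], "drone"),
    ([0.8, 0.9], "drone"),
    ([0.1, 0.2], "bird"),
    ([0.2, 0.1], "bird"),
]
label = nearest_neighbor_classify([0.85, 0.7], training)
```

Because the distance is unweighted, features with very different numeric ranges would normally be scaled before use so that no single feature dominates the norm.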

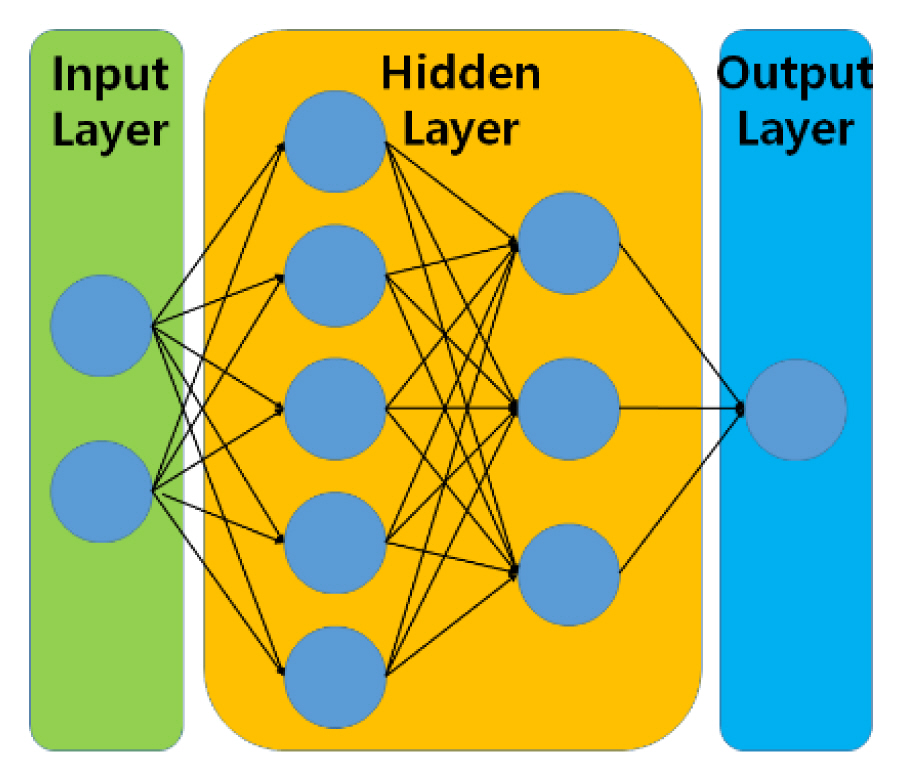

As NNC2, we constructed a neural network composed of two hidden layers: the first layer had five nodes and the second had three [16] (Fig. 8). Training was conducted using a backpropagation algorithm; the output was set to “1” for drones and “0” for birds. Because the decrease in the training error after 5,000 iterations was slight, 5,000 iterations were conducted to limit the computation time. The classification was performed by following the general target recognition procedure (Fig. 9). When the test data were obtained, the target location and motion parameters were not known; thus, the target was randomly positioned in the training space, and the micro-motion was modeled using a parameter that was randomly set within the range of that parameter in the training data. Then, an MD image was obtained following Fig. 6. Classification was conducted using the defined features (Table 1), and the importance of each feature and the classification accuracy of the two classifiers were evaluated.
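The network structure described above (two hidden layers with five and three nodes and a single output) can be sketched as a forward pass. The sigmoid activation, the random initialization, and all names are assumptions, and the backpropagation training loop is omitted.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def init_layer(n_in, n_out, rng):
    """Small random weights and zero biases for one fully connected layer."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return {"W": weights, "b": [0.0] * n_out}

def build_network(n_features, seed=0):
    """Layer sizes n_features -> 5 -> 3 -> 1, following the structure in the text."""
    rng = random.Random(seed)
    sizes = [n_features, 5, 3, 1]
    return [init_layer(sizes[i], sizes[i + 1], rng) for i in range(3)]

def forward(layers, x):
    """Propagate a feature vector; the output is read as near 1 for drone, near 0 for bird."""
    a = list(x)
    for layer in layers:
        a = [sigmoid(sum(w * v for w, v in zip(row, a)) + b)
             for row, b in zip(layer["W"], layer["b"])]
    return a[0]

def count_parameters(layers):
    return sum(len(l["W"]) * len(l["W"][0]) + len(l["b"]) for l in layers)

net = build_network(n_features=3)
score = forward(net, [0.2, 0.7, 0.1])
n_params = count_parameters(net)
```

For a three-feature input the network has 42 trainable parameters, which gives a sense of how small the model is.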

Simulations were conducted using an X-band FMCW radar with center frequency = 9.6 GHz and pulse-repetition frequency (PRF) = 4 kHz, which are the specifications of a tracking radar that we are developing. To analyze the importance of the features that represent the periodicity of the MD signal, observations were conducted for two values of TCPI. The first was 0.0385 seconds, which is the standard dwell time of the scanning radar, and the second was 1.001 seconds, so that a long observation time could exploit the periodicity of the MD signal. The bandwidth was assumed to be B = 150 MHz; however, we reduced it to 0.150 MHz for fast computation, because the MD image does not depend on the range resolution but on sampling, at the PRF, the signal of the target located in a single range bin (Fig. 10).
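The consequences of these parameters can be checked with a few lines of arithmetic. The sketch below uses the textbook relations for the number of slow-time samples (TCPI × PRF), the Doppler resolution (1/TCPI), and the range resolution (c/2B); the function names are illustrative.

```python
C = 299_792_458.0  # speed of light [m/s]
PRF = 4000.0       # pulse-repetition frequency [Hz]

def slow_time_samples(t_cpi_s):
    """Number of chirps collected during one CPI."""
    return round(t_cpi_s * PRF)

def doppler_resolution_hz(t_cpi_s):
    """Frequency resolution of an FT over the whole CPI."""
    return 1.0 / t_cpi_s

def range_resolution_m(bandwidth_hz):
    """Standard range resolution c / (2B)."""
    return C / (2.0 * bandwidth_hz)

n_short = slow_time_samples(0.0385)        # 154 samples
n_long = slow_time_samples(1.001)          # 4004 samples
res_short = doppler_resolution_hz(0.0385)  # about 26 Hz
res_long = doppler_resolution_hz(1.001)    # about 1 Hz
dr_full = range_resolution_m(150e6)        # about 1 m
dr_reduced = range_resolution_m(0.150e6)   # about 1 km
```

The short CPI resolves frequencies only to about 26 Hz, which is coarser than a flapping rate of a few hertz, whereas the long CPI resolves about 1 Hz. This is consistent with the periodicity-based features needing the longer observation time.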

We assumed a normal-sized drone composed of four wings, each of which had four blades rotating at a frequency between fb,1 = 40 Hz and fb,2 = 50 Hz. The blade dimensions were selected as ab = 8 cm and bb = 1.5 cm (Fig. 2). For the bird, we assumed a wing span = 60 cm (i.e., aw = bw = 15 cm) and a flapping frequency between fw,1 = 4 Hz and fw,2 = 7 Hz. As mentioned above, the MD of a bird is very different from that of a drone (Fig. 11). Due to the asymmetric motion of a bird, its MD is not symmetric and blade flashes are not observed. In addition, the period of its MD is much longer than that of the blade.
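To give a feel for the MD bandwidth of the blade, the maximum Doppler shift of a rotating tip can be estimated with the standard relation fD = 2v/λ, where v = 2πfb·r is the tip speed. The tip radius of 8 cm used below is an assumption made for illustration, as is the choice of the highest rotation frequency.

```python
import math

C = 299_792_458.0  # speed of light [m/s]

def max_tip_doppler_hz(f_rot_hz, radius_m, f_carrier_hz):
    """Largest Doppler shift of a tip rotating at f_rot_hz on a circle of radius_m,
    seen along a line of sight lying in the rotation plane."""
    wavelength = C / f_carrier_hz
    tip_speed = 2.0 * math.pi * f_rot_hz * radius_m
    return 2.0 * tip_speed / wavelength

# Illustrative values: 50 Hz rotation, assumed 8 cm tip radius, 9.6 GHz carrier.
f_d = max_tip_doppler_hz(50.0, 0.08, 9.6e9)
unambiguous_limit = 4000.0 / 2.0  # +/- PRF/2 for complex slow-time sampling
```

Under these assumptions the tip Doppler is roughly 1.6 kHz, which stays within the ±2 kHz span offered by a 4 kHz PRF.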

In constructing the training database, the training space was divided into 250 subspaces by using a grid. The range on the x-axis between 300 and 1,500 m was divided into ten equal intervals; the range on the z-axis between 10 and 1,000 m was divided into five equal intervals; and the azimuth angle between −45° and +45° was divided into five equal intervals (Fig. 12(a)). At each grid point, the flight direction was set between −θt = −30° and +θt = 30° in increments of Δθt = 12°. For each flight direction on each grid point, the MD image was obtained by varying fb and fw in increments of 1 Hz. The test data were randomly obtained. Identical simulations were conducted for bistatic observations with a receiving radar located at 1,000 m on the y-axis from the transmitting radar at the origin.
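The size of the resulting training database can be estimated by counting the sampled parameters. The sketch assumes one starting point per subspace and inclusive end points for every sampled range; both are assumptions, so the totals are indicative only.

```python
def inclusive_steps(start, stop, step):
    """Number of samples from start to stop (both included) in increments of step."""
    return int(round((stop - start) / step)) + 1

n_subspaces = 10 * 5 * 5                     # x, z, and azimuth intervals
n_directions = inclusive_steps(-30, 30, 12)  # flight directions
n_fb = inclusive_steps(40, 50, 1)            # blade rotation frequencies [Hz]
n_fw = inclusive_steps(4, 7, 1)              # wing flapping frequencies [Hz]

# Assumed: one MD image per (subspace, direction, frequency) triple.
drone_images = n_subspaces * n_directions * n_fb
bird_images = n_subspaces * n_directions * n_fw
```

Under these assumptions the database holds 16,500 drone images and 6,000 bird images, far fewer than a database covering every azimuth and elevation pair independently would need.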

At a random position in the training space (i.e., 300 m ≤ x ≤ 1,500 m, 10 m ≤ z ≤ 100 m, 0° ≤ azimuth angle ≤ 45°), the target was flown in a random direction at −30° ≤ θt ≤ 30° with a random micro-motion frequency (Fig. 12(b)). For TCPI = 0.0385 seconds, 2,500 test images were used per target; for TCPI = 1.001 seconds, this number was decreased to 300 due to the increased computation time. As in the training phase, the bistatic test data were obtained using the same parameters. The classification accuracy was expressed as the correct classification percentage:

where Mc is the number of correct classifications and Mte is the number of test samples.
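Written out from the definitions of Mc and Mte, the correct classification percentage takes the usual form below. This is a reconstruction from the surrounding text rather than the typeset equation.

```latex
P_c = \frac{M_c}{M_{te}} \times 100 \; (\%)
```

For example, a hypothetical count of 2,450 correct decisions out of 2,500 test images would give Pc = 98%.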

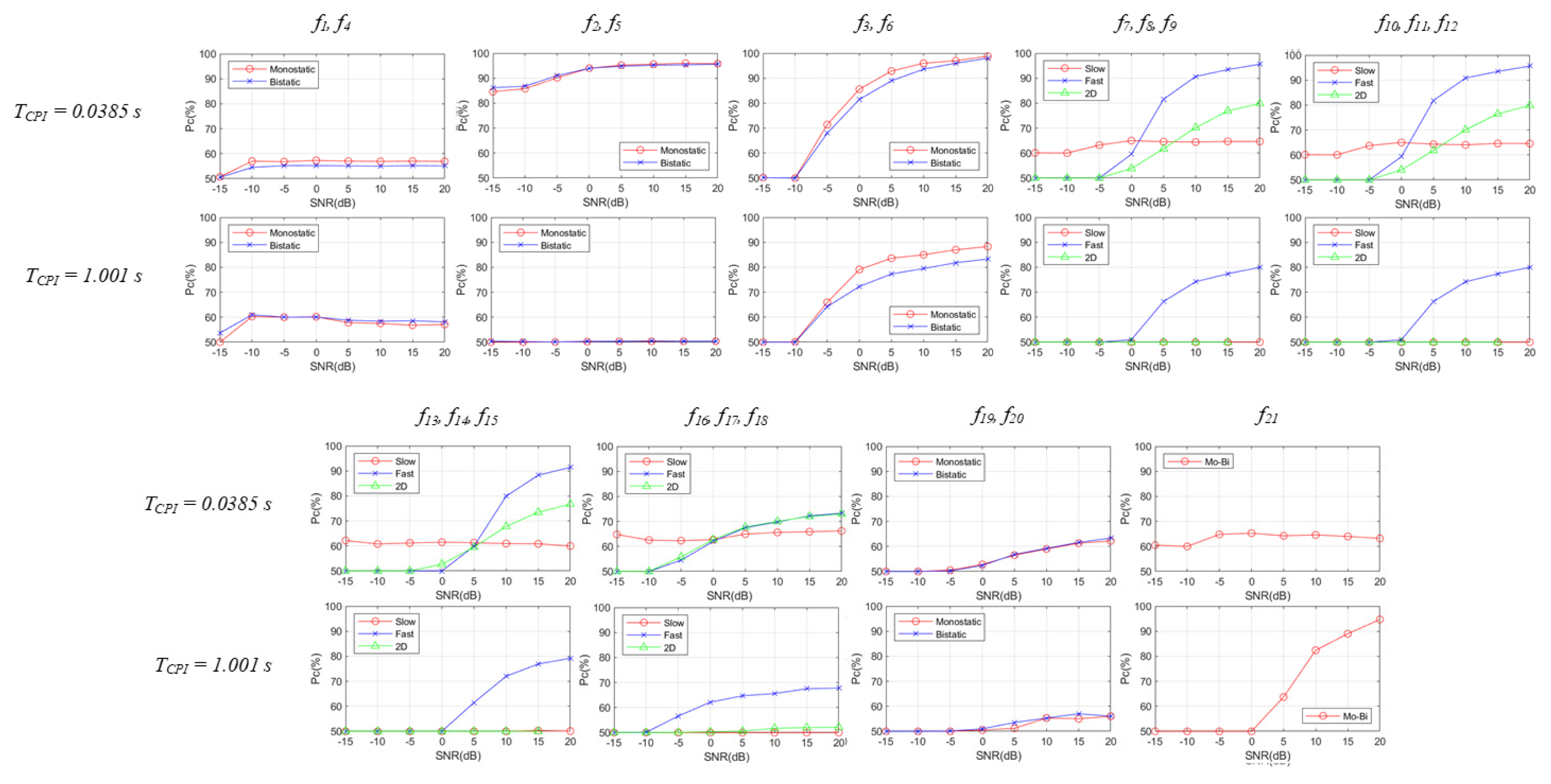

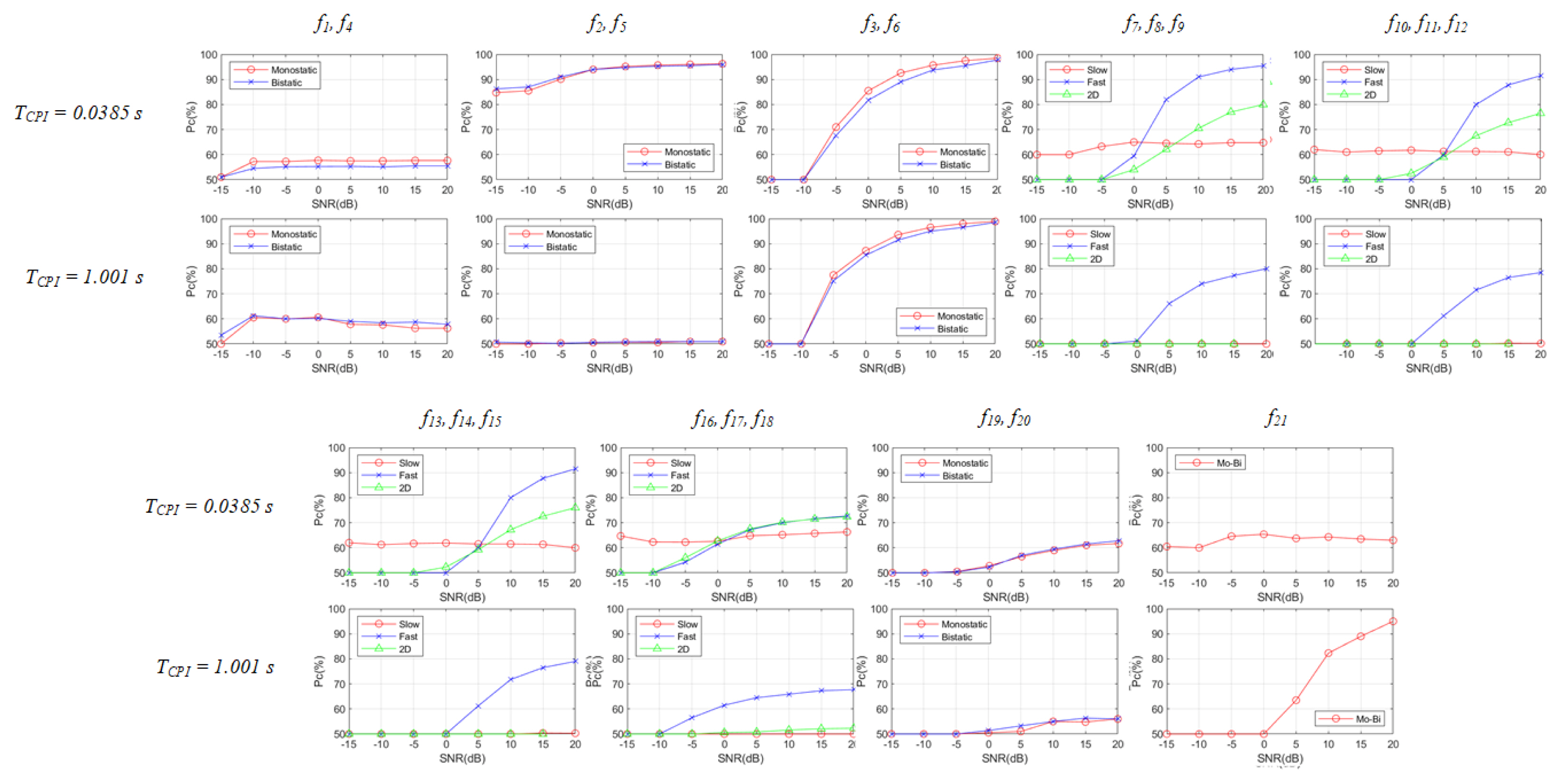

Classifications were performed assuming that the targets were stationary with random micro-motion parameters at a randomly selected position. The signal-to-noise ratio (SNR) was varied from −15 to 20 dB in increments of 5 dB, and NNC1 was used as the classifier. The Pc values for both TCPI values increased with the SNR, except for f21 for TCPI = 0.0385 seconds. Pc was approximately 100% at SNR = 20 dB (Fig. 13). Comparing the features for each TCPI, the highest Pcs at low SNRs were obtained using f2 and f5 (i.e., MD frequency fh for monostatic and bistatic scenarios), which is a rather unexpected result. These results are attributed to the fact that for the short CPI, the frequency of the MD change of the bird is approximately 0 Hz due to the slow variation in bird flapping, whereas the blade rotation is fast enough to yield a nonzero value of fh. As a result, Pc was high.
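One common way to set a prescribed SNR in such simulations is to add complex white Gaussian noise scaled to the measured signal power. The sketch below illustrates that approach; it is an assumption about the procedure, not a description of how the noise was generated in this study.

```python
import math
import random

def add_noise(signal, snr_db, rng):
    """Add complex white Gaussian noise so that signal power / noise power matches snr_db."""
    signal_power = sum(abs(s) ** 2 for s in signal) / len(signal)
    noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    sigma = math.sqrt(noise_power / 2.0)  # per real and imaginary component
    return [s + complex(rng.gauss(0.0, sigma), rng.gauss(0.0, sigma)) for s in signal]

# SNR levels from -15 dB to 20 dB in 5 dB steps, as in the text.
snr_levels_db = list(range(-15, 25, 5))

# Illustrative check at 0 dB with a unit-amplitude test signal.
rng = random.Random(0)
clean = [complex(1.0, 0.0)] * 20000
noisy = add_noise(clean, 0.0, rng)
measured_noise_power = sum(abs(n - c) ** 2 for n, c in zip(noisy, clean)) / len(clean)
measured_snr_db = 10.0 * math.log10(1.0 / measured_noise_power)
```

Seeding the generator keeps each noise realization repeatable, which makes it easier to compare features at the same SNR.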

Comparing the results obtained at the two TCPI values, the features that exploited the MD periodicity and the projected sum onto the horizontal axis (i.e., f1, f7, f10, f13, f16) improved significantly at TCPI = 1.001 seconds because the increased observation time allowed the periodicity to be represented by the FT along the time axis (= horizontal axis). f2 and f5 also represent MD periodicity; however, the Pcs of these features for TCPI = 1.001 seconds were lower than those for TCPI = 0.0385 seconds because of the large zero–nonzero difference at the short TCPI.

Likewise, Pc of f21 increased with TCPI because the increased observation time represented the MD image in more detail. The entropy-related features (f7–f12) yielded a high Pc value but were sensitive to noise.

To represent a real observation scenario, the first set of classifications was performed to study the effect of velocity v and acceleration a of a moving target by randomly selecting 0 ≤ v ≤ 10 m/s and 0 ≤ a ≤ 10 m/s².

As v of the rigid body shifted the MD and a tilted the MD upward or downward, the test MD image was significantly different from the training image (Fig. 14). Other simulation conditions were the same as those mentioned in Section III-2-1.

The classification results demonstrated that v and a should be accurately estimated and compensated for (Fig. 15). Compared to the result of the stationary target (Fig. 13), Pc of the features decreased significantly. Due to the change in MD frequency caused by v and a, the RCS frequency varied; thus, f1 and f4 yielded poor Pc. In addition, Pc of the features that use the horizontally projected sum (f7, f10, f13, f16) decreased considerably due to the tilted MD image in the TF domain; the decrease was larger at TCPI = 1.001 seconds than at TCPI = 0.0385 seconds because the tilt of the MD frequency in the TF domain accumulated over the longer observation. Thus, the test Iff became totally different from the training Iff after the FT along the horizontal axis.
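The size of the shift and tilt can be estimated from the standard Doppler relation for the rigid-body motion, fD(t) = 2(v + at)/λ. The sketch below assumes radial motion along the line of sight and a 9.6 GHz carrier; sign conventions and names are illustrative.

```python
C = 299_792_458.0  # speed of light [m/s]
WAVELENGTH = C / 9.6e9

def body_doppler_hz(v, a, t):
    """Doppler shift of the rigid body at time t for radial velocity v and acceleration a."""
    return 2.0 * (v + a * t) / WAVELENGTH

def doppler_tilt_hz(a, t_cpi_s):
    """Total drift of the Doppler frequency accumulated over one CPI."""
    return 2.0 * a * t_cpi_s / WAVELENGTH

# Worst case in the simulated ranges: v = 10 m/s, a = 10 m/s^2.
shift = body_doppler_hz(10.0, 0.0, 0.0)   # about 640 Hz offset of the whole MD image
tilt_short = doppler_tilt_hz(10.0, 0.0385)  # about 25 Hz over the short CPI
tilt_long = doppler_tilt_hz(10.0, 1.001)    # about 641 Hz over the long CPI
```

The drift grows linearly with the observation time, so it is about 26 times larger for the long CPI, which is consistent with the stronger degradation observed there.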

TCPI affected the Pc values of several features. At a short TCPI, the Pc values of the features based on the frequency of the MD change (f2 and f5) were high because these features are nonzero for a drone and close to zero for a bird. However, at a long TCPI, the frequency of the MD change affected by v and a was represented by the FT, so Pc decreased to approximately 50%. The MD bandwidth (f3 and f6) was less affected because the MD bandwidth of the drone was much larger than that of the bird. The entropy obtained using the projected sum onto the vertical axis (f7 and f10, “fast”) was less affected by v and a than the MD bandwidth because the entropy is not determined by the MD bandwidth but by the overall distribution. The Pc values of f19 and f20 were also affected by v and a, and those of f21 increased with TCPI due to the similarity between the monostatic and bistatic MD images for the increased observation time.

The second set of classifications was performed to study the effect of multiple targets because birds and drones may fly in groups with similar motion parameters. The variables v and a were assumed to have been perfectly compensated for, the number of targets was randomly selected between 1 and 5 inclusive, and the classifications were conducted using the same parameters as those used in the first classification.

Because the effects of v and a were completely removed, Pc (Fig. 16) was only slightly lower than the values shown in Fig. 13. The Pc values for both TCPI values increased with SNR except for f21 for TCPI = 0.0385 seconds. f2 and f5, which represent MD frequency fh, yielded the highest Pc at low SNRs due to zero and nonzero MD frequencies. f1, f7, f10, f13, and f16, which represent the projected sum onto the horizontal axis, improved significantly for TCPI = 1.001 seconds due to the increased observation time. f2 and f5 for TCPI = 1.001 seconds yielded lower Pc than those for TCPI = 0.0385 seconds because of the large zero–nonzero difference at the short TCPI. The entropy-related features (f7–f12) yielded high Pc but were sensitive to noise, and Pc of f21 increased with TCPI due to the increased observation time.

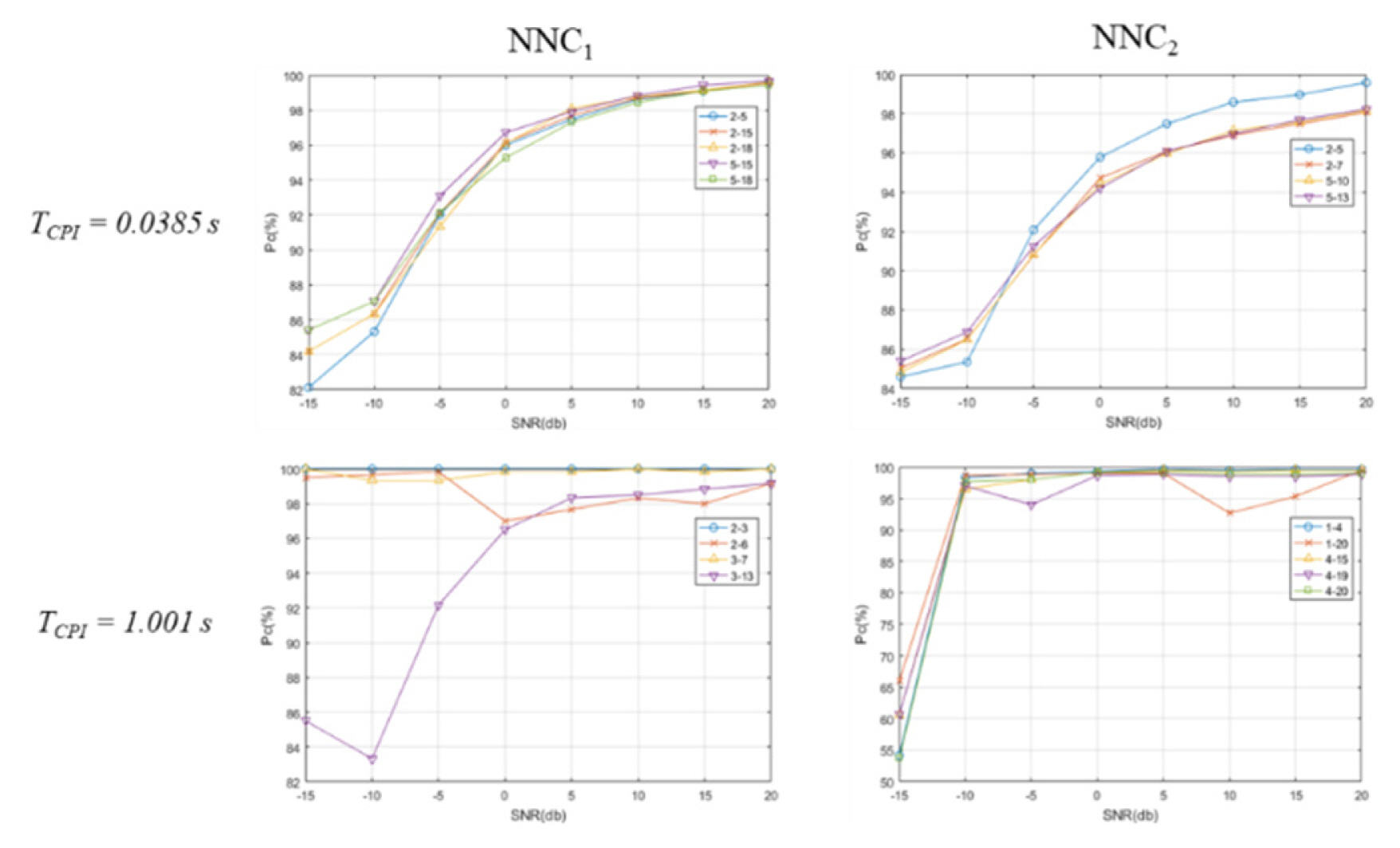

To demonstrate the improvement achieved by feature fusion, classifications were conducted by fusing the features. Combinations of the 21 features were used as the feature vector, and Pcs of NNC1 and NNC2 were compared for various SNRs using the same training and test data as those presented in Section III-2-1. The number of possible combinations was large, and many of them had Pc ≈ 100%, so combinations of two and three among the 21 features were analyzed, and the optimal combinations that yielded Pc ≈ 100% were found.
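The size of this search can be checked by enumerating the candidate feature subsets. The sketch below only generates and counts the combinations; the feature labels are placeholders, and evaluating Pc for each subset is not shown.

```python
from itertools import combinations
from math import comb

# Placeholder labels f1 ... f21 for the 21 features.
features = [f"f{i}" for i in range(1, 22)]

pairs = list(combinations(features, 2))
triples = list(combinations(features, 3))

n_pairs = len(pairs)      # equals comb(21, 2)
n_triples = len(triples)  # equals comb(21, 3)
```

There are 210 pairs and 1,330 triples, so an exhaustive evaluation of both classifiers at every SNR remains tractable, whereas larger subsets grow quickly (for example, 5,985 subsets of four features).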

For combinations of two features, and considering the sum of Pc at SNR = 0 dB and 20 dB, the best five combinations obtained Pc ≥ 95% for SNR = 0 dB and Pc ≥ 98% for SNR = 20 dB (Fig. 17). As in the classifications conducted above, Pc increased with SNR. For TCPI = 0.0385 seconds, NNC1 using f2 + f5, f2 + f15, f2 + f18, f5 + f15, and f5 + f18 yielded high Pc ≈ 100% for SNR = 20 dB, and for NNC2, f2 + f5, f2 + f7, f5 + f10, and f5 + f13 were more effective than other features. Comparing NNC1 and NNC2, the Pc of NNC1 was higher than that of NNC2. This is because the designed NNC2 (Fig. 8) was rather simple and was over-fitted to the training data; thus, Pc was lower because of the lack of generalization capability.

Pcs for TCPI = 1.001 seconds were much higher for both classifiers than for TCPI = 0.0385 seconds. For NNC1, Pcs of f2 + f3, f2 + f6, and f3 + f7 were approximately 100% for all SNRs, and f3 + f13 was sensitive to noise. For NNC2, Pcs of f1 + f4, f1 + f20, f4 + f15, f4 + f19, and f4 + f20 were slightly lower than those of NNC1 at SNR ≥ −10 dB but close to 100%. The reason for the improvement was that for TCPI = 1.001 seconds, the features f2 and f7 for NNC1 and the features f1, f4, f19, and f20 for NNC2 better represented the periodicity of MD than at TCPI = 0.0385 seconds.

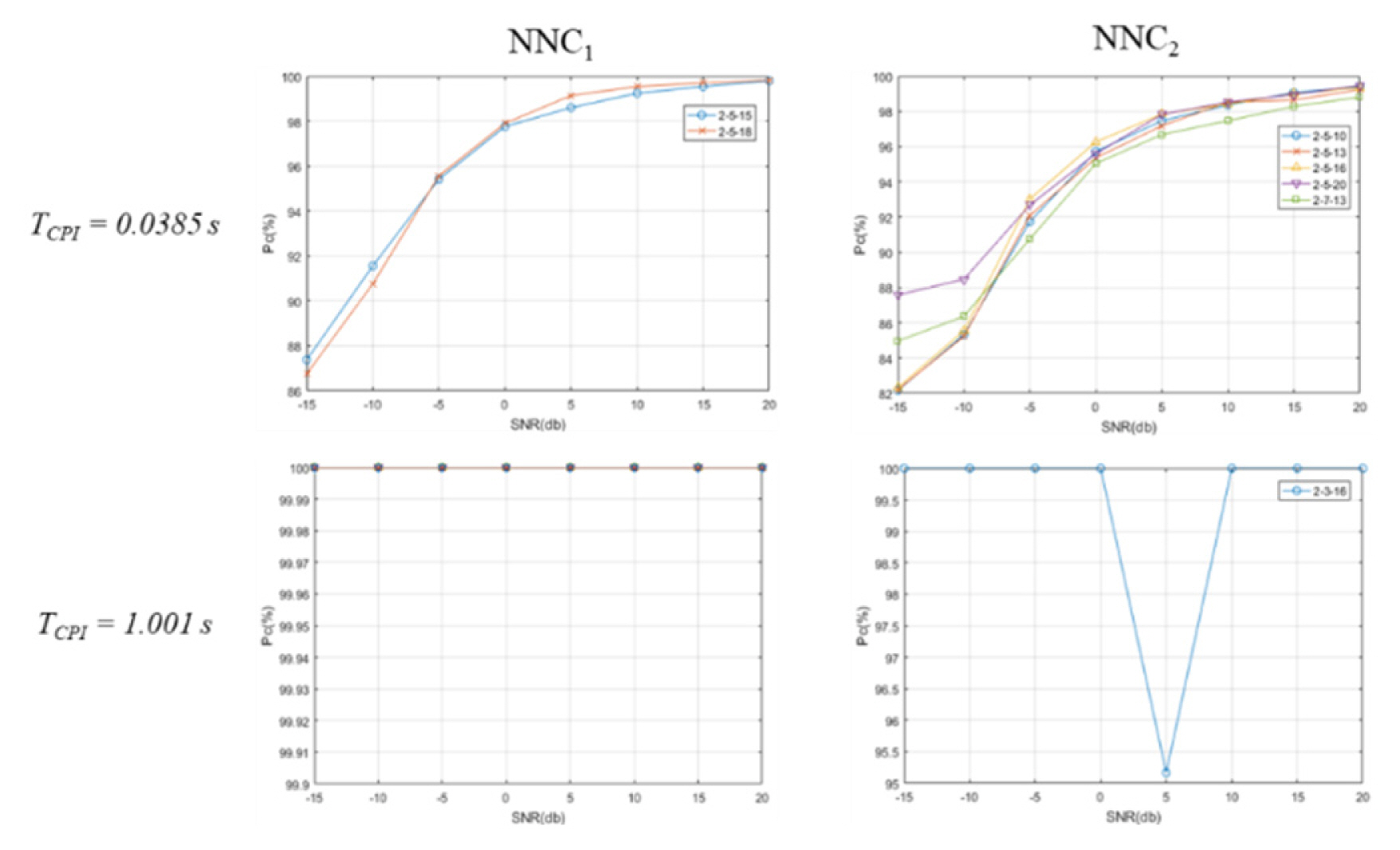

Combinations of three features increased the Pc value compared to those using combinations of two features, so the requirement to sift the classification results was changed. For TCPI = 0.0385 seconds, the best five combinations that satisfied Pc ≥ 90% for SNR = −10 dB and Pc ≥ 98% for SNR = 20 dB were displayed (Fig. 18). For TCPI = 1.001 seconds, the combinations for NNC1 that yielded Pc = 100% for all SNRs were used, and for NNC2, the combinations that yielded Pc ≥ 95% for all SNRs were used.

At TCPI = 0.0385 seconds Pc improved only slightly at low SNRs because of the poor representation of the target by the features that exploit periodicity. Using NNC1, f2 + f5 + f15 and f2 + f5 + f15 satisfied the requirement; using NNC2, f2 + f5 + f10, f2 + f5 + f13, f2 + f5 + f16, f2 + f5 + f20, and f2 + f7 + f13 satisfied the requirement. Pcs of NNC1 were slightly higher than those of NNC2, although the number of feature combinations for NNC1 was smaller than that for NNC2. For both classifiers, features f2 and f5 worked well because of the zero–nonzero MD relationship (Section III-2-1).

For TCPI = 1.001 seconds, 30 feature combinations for NNC1 yielded Pcs = 100% for all SNRs: f2 + f3 + f7, f2 + f3 + f9, f2 + f3 + f10, f2 + f3 + f12, f2 + f3 + f13, f2 + f3 + f14, f2 + f3 + f15, f2 + f3 + f16, f2 + f3 + f18, f2 + f3 + f19, f2 + f3 + f20, f2 + f3 + f21, f2 + f7 + f9, f2 + f7 + f10, f2 + f7 + f12, f2 + f7 + f13, f2 + f7 + f16, f2 + f7 + f21, f2 + f9 + f13, f2 + f10 + f13, f2 + f13 + f16, f2 + f13 + f21, f5 + f6 + f7, f5 + f6 + f10, f5 + f6 + f12, f5 + f6 + f13, f5 + f6 + f16, f6 + f10 + f12, f6 + f10 + f16, and f6 + f10 + f21. The increased observation time made the features related to the horizontal component work very well, and the increased dimension provided additional information to separate the two classes further. For NNC2, f2 + f3 + f16 satisfied the requirement; at SNR = 5 dB, Pc was only 95.2% due to the lack of generalization capability, so further study is required to achieve high Pc.

This paper proposes efficient features to classify drones and birds by using an FMCW radar. We conducted simulations to evaluate the effectiveness of each feature. The radar signal was constructed using the analytically known RCS of the drone blade and bird wing. A training database that considered flight scenarios was constructed to reduce the required memory space and computation time. The simulation results suggested that features that represent the vertical component of the MD image can be used regardless of the observation time, but those that represent the horizontal component of the MD image are only effective if the observation is extended. For a target that had v and a, Pc decreased considerably, so the effects of v and a should be removed before the features are extracted. The proposed features were also robust to the existence of multiple targets, yielding a small decrement in Pc. High Pc values were obtained by combining the features; as the observation time increased, the discriminant ability of the features related to the horizontal axis increased, so the improvement in Pc increased. NNC1 yielded a higher Pc than NNC2, which is attributable to the simple structure of the neural network. Further studies should be conducted to increase the number of neurons or use convolutional neural network structures.

The classification results were obtained assuming the specifications of a radar system that we are currently developing. Therefore, noise, clutter, and antenna beam shape might make the modeled radar signal differ from the measured signal. The blade and wing models used here are only one of a wide range of possible models. The materials that constitute the blade might affect its RCS. Birds have wings of many shapes and flap them at a range of frequencies. Currently, we are conducting experiments to measure the MD signal of real flying drones and birds and to remove clutter from the measured signal. Further research on classification using the measured signal will be conducted and used to improve the features and the classification methods.

This work was supported by the Institute of Information & communications Technology Planning & Evaluation (IITP) grant funded by the Korea government (MSIT) (No. 2018-0-00197, Development of ultra-low power intelligent edge SoC technology based on lightweight RISC-V processor).

Acknowledgments

This research was supported by Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Science, ICT & Future Planning (No. 2018R1D1A1B07044981). This research was supported by the National Research Foundation (NRF), Korea, under project BK21 FOUR (Smart Robot Convergence and Application Education Research Center).

Table 1

Features proposed in this paper

References

1. P Tait, Introduction to Radar Target Recognition. London, UK: The Institution of Engineering and Technology, 2005.

2. SH Park, MG Joo, and KT Kim, "Construction of ISAR training database for automatic target recognition," Journal of Electromagnetic Waves and Applications, vol. 25, no. 11–12, pp. 1493–1503, 2011.

3. SK Han, HT Kim, SH Park, and KT Kim, "Efficient radar target recognition using a combination of range profile and time-frequency analysis," Progress in Electromagnetics Research, vol. 108, pp. 131–140, 2010.

4. VC Chen, F Li, SS Ho, and H Wechsler, "Micro-Doppler effect in radar: phenomenon, model, and simulation study," IEEE Transactions on Aerospace and Electronic Systems, vol. 42, no. 1, pp. 2–21, 2006.

5. T Thayaparan, S Abrol, E Riseborough, LJ Stankovic, D Lamothe, and G Duff, "Analysis of radar micro-Doppler signatures from experimental helicopter and human data," IET Radar, Sonar & Navigation, vol. 1, no. 4, pp. 289–299, 2007.

6. JH Jung, U Lee, SH Kim, and SH Park, "Micro-Doppler analysis of Korean offshore wind turbine on the L-band radar," Progress in Electromagnetics Research, vol. 143, pp. 87–104, 2013.

7. JH Jung, KT Kim, SH Kim, and SH Park, "Micro-Doppler extraction and analysis of the ballistic missile using RDA based on the real flight scenario," Progress in Electromagnetics Research M, vol. 37, pp. 83–93, 2014.

8. L Liu, D McLernon, M Ghogho, W Hu, and J Huang, "Ballistic missile detection via micro-Doppler frequency estimation from radar return," Digital Signal Processing, vol. 22, no. 1, pp. 87–95, 2012.

9. J Li and H Ling, "Application of adaptive chirplet representation for ISAR feature extraction from targets with rotating parts," IEE Proceedings-Radar, Sonar and Navigation, vol. 150, no. 4, pp. 284–291, 2003.

10. L Stankovic, I Djurovic, and T Thayaparan, "Separation of target rigid body and micro-Doppler effects in ISAR imaging," IEEE Transactions on Aerospace and Electronic Systems, vol. 42, no. 4, pp. 1496–1506, 2006.

11. Q Zhang, TS Yeo, HS Tan, and Y Luo, "Imaging of a moving target with rotating parts based on the Hough transform," IEEE Transactions on Geoscience and Remote Sensing, vol. 46, no. 1, pp. 291–299, 2008.

12. A Ghaleb, L Vignaud, and JM Nicolas, "Micro-Doppler analysis of wheels and pedestrians in ISAR imaging," IET Signal Processing, vol. 2, no. 3, pp. 301–311, 2008.

13. BR Mahafza, Radar Systems Analysis and Design Using MATLAB. Boca Raton, FL: CRC Press, 2000.

14. S Park, J Jung, S Cha, S Kim, S Youn, I Eo, and B Koo, "In-depth analysis of the micro-Doppler features to discriminate drones and birds," In: Proceedings of 2020 International Conference on Electronics, Information, and Communication (ICEIC); Barcelona, Spain. 2020, pp 1–3.

15. RO Duda, PE Hart, and DG Stork, Pattern Classification. 2nd ed. New York, NY: John Wiley & Sons Inc, 2001.

16. CC Aggarwal, Neural Networks and Deep Learning: A Textbook. New York, NY: Springer, 2018.

17. A Meta, P Hoogeboom, and LP Ligthart, "Signal processing for FMCW SAR," IEEE Transactions on Geoscience and Remote Sensing, vol. 45, no. 11, pp. 3519–3532, 2007.

18. S Qian and D Chen, Joint Time-Frequency Analysis: Methods and Applications. Upper Saddle River, NJ: Prentice-Hall, 1996.

19. S Qian, Introduction to Time-Frequency and Wavelet Transforms. Upper Saddle River, NJ: Prentice-Hall, 2002.

Biography

Se-Won Yoon received his B.S. and M.S. degrees in electronic engineering from Pukyong National University, Busan, Korea, in 2017 and 2019, respectively, where he is currently working toward the Ph.D. degree in electronic engineering. His research interests are in the areas of radar target imaging and recognition, radar signal processing, target motion compensation, pattern recognition using artificial intelligence, and RCS prediction.

Biography

Soo-Bum Kim received his B.S. and Ph.D. degrees in electronics engineering from Pohang University of Science and Technology (POSTECH), Pohang, Korea, in 1997 and 2002, respectively. From 2003 to 2014, he was a senior member of the research staff at the Electronics and Telecommunications Research Institute (ETRI), the ISR Center of LIG Nex1, and Digitron Co. Ltd. Since 2015, he has been the CEO of RADSYS Co. Ltd., Daegu, Korea. His research interests include the system design and signal processing of radar and SAR systems.

Biography

Joo-Ho Jung received his B.S. degree from the Korea Air Force Academy, Cheongju, Korea, in 1991 and his M.S. and Ph.D. degrees in electronic engineering from Pohang University of Science and Technology (POSTECH) in 1998 and 2007, respectively. From 2008 to 2012, he was a lieutenant colonel in the Defense Acquisition Program Administration, Seoul, Korea. In 2012, he joined the Department of Electrical Engineering, POSTECH, as a research associate professor, and in 2015, he was with the Unmanned Technology Research Center, Korea Advanced Institute of Science and Technology, Daejeon. In 2020, he joined Kookmin University, Seoul, as the Director of the EM Technology Research Center. His research interests are radar target recognition, radar signal processing, and electromagnetic analysis in wind farms by various military radars.

Biography

Sang-Bin Cha received his B.S. and M.S. degrees in electronic engineering from Pukyong National University, Busan, Korea, in 2017 and 2019, respectively, where he is currently working toward the Ph.D. degree in electronic engineering. His research interests are in the areas of radar target imaging and recognition, radar signal processing, target motion compensation, pattern recognition using artificial intelligence, RCS prediction, and electromagnetic analysis of wind farms.

Biography

Young-Seok Baek received his B.S. and M.S. degrees in electronic engineering from Hanyang University, Seoul, Korea, in 1985 and 1987, respectively. In 1989, he joined the Electronics and Telecommunications Research Institute (ETRI), Daejeon, Korea, where he is currently a senior engineer. His research interests are semiconductor CAD, digital design and verification methodology, wireless communication, face recognition, and radar signal processing.

Biography

Bon-Tae Koo received his M.S. degree in electrical engineering from Korea University, Seoul, Korea, in 1991. In 1991, he was with the System Semiconductor Division, Hyundai Electronics Company, Ichon, Korea, where he was involved in the chip design of video codec and DVB modem. From 1993 to 1995, he was with HEA, San Jose, USA, where he was responsible for the design of MPEG2 video codec chips. From 1996 to 1997, he was with TVCOM, San Diego, USA. In 1998, he joined Dongbu Electronics as a team leader with the system semiconductor Lab and focused on the methodology of semiconductor chip design. In 1999, he joined the Application SoC team, ETRI, where he is currently a team leader. His research activities have included the chip design of MPEG4 video, T-DMB receiver, LTE femtocell modem, and DSP processor. His research activities focused on millimeter-wave AI radars.

Biography

In-Oh Choi received his B.S. and M.S. degrees in electronic engineering from Pukyong National University, Busan, Korea, in 2012 and 2014, respectively, and his Ph.D. degree in electronic engineering from Pohang University of Science and Technology (POSTECH), Pohang, Korea, in 2019. From 2019 to 2021, he was a senior researcher with the Agency for Defense Development. In 2021, he joined the faculty of the Department of Electronics and Communications Engineering, Korea Maritime & Ocean University, Busan, Korea, where he is currently an assistant professor. His current research areas of interest include micro-Doppler analysis, ballistic target discrimination, vital sign detection, automotive target recognition, and calibration of polarimetric synthetic aperture radar.

Biography

Sang-Hong Park received his B.S., M.S., and Ph.D. degrees in electronic engineering from Pohang University of Science and Technology (POSTECH), Pohang, Korea, in 2004, 2007, and 2010, respectively. In 2010, he was a Brain Korea 21 Postdoctoral Fellow at the Electromagnetic Technology Laboratory, POSTECH. In 2010, he joined the faculty of the Department of Electronics Engineering, Pukyong National University, Busan, Korea, where he is currently a professor. His research interests are radar target imaging and recognition, radar signal processing, target motion compensation, and radar cross-section prediction.
